"Intel’s Arc A770 and A750 were decent at launch, but over the past few months, they’ve started to look like some of the best graphics cards you can buy if you’re on a budget. Disappointing generational improvements from AMD and Nvidia, combined with high prices, have made it hard to find a decent GPU around $200 to $300 — and Intel’s GPUs have silently filled that gap.

They don’t deliver flagship performance, and in some cases, they’re just straight-up worse than the competition at the same price. But Intel has clearly been improving the Arc A770 and A750, and although small driver improvements don’t always make a splash, they’re starting to add up."

@nanoUFO Honestly, I am considering picking one up for Starfield, depending on how they perform there. I’m sure their Linux support isn’t incredible, but Nvidia also has a lot of issues on Linux and I’ve been running my 1060 for years now.

Have you considered upgrading to an eBay 1080ti? Still a bulletproof card depending on your application, unless you’re trying to get that sweet sweet AV1 encoding.

I don’t think realtime AV1 encoding is really going to be necessary for quite a while tbh. A Ryzen i3 should be able to hit around 10fps on software AV1 encoders and get like 5x the quality. Otherwise x264 on medium is blazing fast and way better than what hardware h.265 will get you.

I really want an energy-efficient variant next time around. I currently have a 1050Ti, and when I upgrade I sort of want something that’s relatively better but with less wattage if possible.

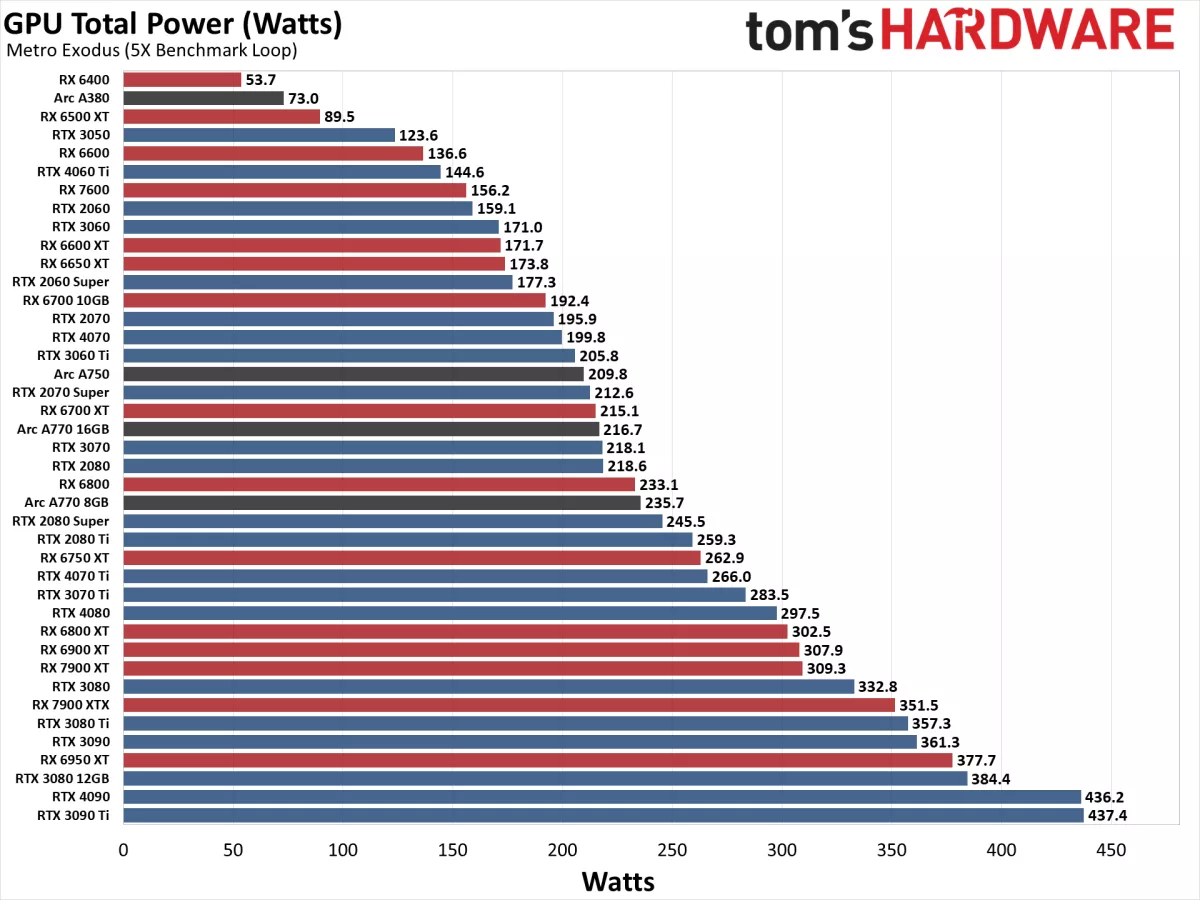

This article has a graph of total GPU power draw (in watts): https://www.tomshardware.com/reviews/gpu-hierarchy,4388.html

It would be interesting to see a ratio of frames per watt for power efficiency.

Thanks for that, that’s good info. It’s good to know the Arc GPUs are trending towards the bottom half of the graph. It would be very cool to see a frames/watts graph as well.
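For anyone who wants to put together that frames/watts comparison themselves, here is a minimal Python sketch. The function names and the card entries are hypothetical placeholders, not measurements of any real GPU; you would plug in the average FPS and power draw from a review you trust.

```python
from typing import List, Tuple

# (name, average FPS, average power draw in watts)
Card = Tuple[str, float, float]


def frames_per_watt(avg_fps: float, avg_power_watts: float) -> float:
    """Return efficiency as frames per second delivered per watt drawn."""
    if avg_power_watts <= 0:
        raise ValueError("power draw must be positive")
    return avg_fps / avg_power_watts


def rank_by_efficiency(cards: List[Card]) -> List[Card]:
    """Sort cards from most to least efficient (highest frames/watt first)."""
    return sorted(cards, key=lambda c: frames_per_watt(c[1], c[2]), reverse=True)


if __name__ == "__main__":
    # Placeholder numbers for illustration only, NOT real benchmark results.
    cards = [
        ("Card A", 60.0, 120.0),
        ("Card B", 90.0, 225.0),
        ("Card C", 45.0, 75.0),
    ]
    for name, fps, watts in rank_by_efficiency(cards):
        print(f"{name}: {frames_per_watt(fps, watts):.2f} frames/watt")
```

Note that a faster card can still rank lower here: in the placeholder data, "Card B" has the highest FPS but the worst frames/watt.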

I agree. I feel like the low wattage cards have become a thing of the past. Everything is so power hungry now.

oneAPI also looks quite juicy… from someone currently suffering under ROCm and refusing to give the green goblin any business.

If you can bear the terrible drivers, consider a used nvidia card. They can be decent deals for gaming as well.

What happened to the drivers for the old cards to make them bad?

Crashes, broken adaptive sync, general display problems and, most importantly, stutter. I’m running a version from about a year ago on my 1070 Ti because every time I try to update, some game starts to stutter and I get to use DDU and try multiple versions until I find one that doesn’t have that problem.

About 2-3 weeks ago, an update also worsened LLM performance by a lot on 30 and 40 series cards. There were a lot of reports on Reddit, not sure if they fixed it yet.

My default advice for any issue on r/techsupport that could be nvidia driver related has been to DDU and install a version from 3-6 months ago and that has worked shockingly well.

That reminds me, have the r/techsupport mods migrated to lemmy yet? Their explanation of the whole reddit issue was great, so I don’t think they’ll want to stay on there.

Anyways, back to the topic. Since OP also mentioned ROCm, I’m assuming he uses Linux for that. The nvidia drivers on linux are pretty much unusable because of all the glitches and instabilities they cause. Nvidia is a giant meme in the linux community because of this.

This is so the way. Using a used Tesla P40 in a Linux server for AI stuff. Card goes hard.

Can you use it for CUDA applications?

No. There may be some workarounds to make it work, but it won’t work directly.

I’ve got a Vega 64 in my HTPC, and it can run Diablo 4 okay at 4K, but I would like to upgrade. It’s hard to find a comparison, but does anyone know how an Arc A750/770 compares to a Vega 64?

Hope this helps.

https://versus.com/en/intel-arc-a770-16gb-vs-msi-radeon-rx-vega-64

My main concern is whether they will keep up the support. I don’t want to go buy something that loses driver support in a year or two. I feel like I have seen too much of that from various companies, though I can’t think of too many examples from Intel other than Optane.

I don’t know about Windows, but on Linux only NVIDIA GPUs can lose driver support.

I welcome any additional competition in the graphics card market.

AMD and NVIDIA have gotten entirely too comfortable.

I gave the A770 a try a few months ago and ended up returning it after a few days. I’d be open to trying it again down the road.

Did you give it a spin in Linux? That’s the main use case that sort of has my interest.

Yep. Tried it on Trisquel to see if it would work with the libre kernel, which it didn’t. Next I tried it on Garuda: several games wouldn’t even launch, and in others it performed on par with or worse than my RX 590.

Ah, that’s unfortunate! Thanks for sharing your experience. I’ve really enjoyed the maturity of AMD on Linux these days with a few cards.

To play devil’s advocate, this was a few months ago, so perhaps Mesa support for the A770 has improved since then.

shhh prices are going to rise!

It was extremely close between the 6700xt and the A770 for me when I was picking my GPU for my virtualized Windows gaming PC. The only reason I went with the 6700xt was because I knew it’d work. I think if Intel sticks this out and actually releases some new cards, they could easily become a decent competitor to AMD and Nvidia for cards the majority of people actually buy. I know that, for me, part of the reason I didn’t pick Intel this time around was fear that they’d just give up and leave me with an abandoned card.

Glad to see more competition in the GPU market, but the Arc cards are still not cheap enough to really take away much market share.

Userbenchmark still has the 3060ti +16% over the a770 and on the market currently the 3060ti is cheaper than the a770.

https://gpu.userbenchmark.com/Compare/Intel-Arc-A770-vs-Nvidia-RTX-3060-Ti/m1850973vs4090

I don’t know much about the performance of the A770, so I don’t really know if that’s right, but I wouldn’t trust userbenchmark at all. They favor Nvidia massively and their ratings are super inaccurate. Here’s an article on some of it: https://www.gizmosphere.org/stop-using-userbenchmark/

I don’t trust anyone saying userbenchmark is biased without their own set of information to back up their claim. Using reddit drama as an excuse to not use a tool is weak.

The article you posted claims this:

“However, consider this: UserBenchmark mentions the NVIDIA GeForce GTX 1660 Super in their GPU section as faster than the Radeon RX 5600 XT.”

This claim in the article is factually incorrect at the current time.

https://gpu.userbenchmark.com/Compare/Nvidia-GTX-1660S-Super-vs-AMD-RX-5600-XT/4056vs4062

Sounds unfair to criticize an article from 2021 for not being up to date with the ever changing metrics of Userbenchmark.

The point stands. Userbenchmark has announced and made changes to their own metric calculations because Ryzen CPUs were getting better scores than Intel. It has a very clear anti-AMD stance that shows in the written reviews and linked videos like the one in your screenshot.

Just from this comparison, I have no clue how Userbenchmark achieved the 8% given the values they’re posting below. 8% is still also reasonably below what other reviewers posted at the time. https://www.techpowerup.com/review/sapphire-radeon-rx-5600-xt-pulse/27.html https://www.techspot.com/review/1974-amd-radeon-rx-5600-xt/

This is just to say that they are pretty clearly biased and do not shy away from altering their metrics to favour one product over the other.

“Sounds unfair to criticize an article from 2021 for not being up to date”

An article from 2021 which hasn’t been updated is by definition not up to date. Neither of the articles you posted even has the 3060ti on the list. Nothing you wrote actually debunks my refutation.

You attacked the credibility of the article just for having some out-of-date information. Just because something they reference is out of date does not mean the article is less relevant. These are still things that happened, and they should weigh on how you view Userbenchmark as a source of information.

I am replying to your userbenchmark defense. Of course the articles I posted have nothing to do with the 3060ti; they were meant to source my claim that even the 8% on your posted screenshot doesn’t seem like an accurate evaluation when comparing these 2 GPUs.

“Just because something they reference is out of date, does not mean the article is less relevant.”

Yes it does.