6·1 year ago

My own thoughts.

- Instead of defining the difference between logging and tracing, the author spams the screen with pages’ worth of examples of why logging is bad, then jumps into tracing by immediately referencing code that uses a specific tracing library (OpenTelemetry Tracer) without at any point explaining what that code is actually doing to someone who is not familiar with it already. To me this smacks of preaching to the choir since if you’re already familiar with this tool, you’re likely already a) familiar with what “tracing” is compared to “logging”, and b) probably a tracing advocate to begin with. If you want to persuade an undecided or unfamiliar audience, confusing them and/or making assumptions about what they know or don’t know is … suboptimal.

- If you’re going to screen dump your code in your rant, FUCKING COMMENT IT YOU GIT! I don’t want to have to read through 100 lines of code in an unfamiliar language written to an unfamiliar architecture to find the three (!) lines that are actually on the fucking topic!

- If you’re going to show changes in your code, put before/after snapshots side by side so I don’t have to go scrolling back to the uncommented hundred-line blob to see what changed. It’s not that hard. Using his own damned example from “Step 1”:

  ```go
  // BEFORE
  func PrepareContainer(ctx context.Context, container ContainerContext, locales []string, dryRun bool, allLocalesRequired bool) (*StatusResult, error) {
      logger.Info(`Filling home page template`)

  // AFTER
  var tr = otel.Tracer("container_api")

  func PrepareContainer(ctx context.Context, container ContainerContext, locales []string, dryRun bool, allLocalesRequired bool) (*StatusResult, error) {
      ctx, span := tr.Start(ctx, "prepare_container")
      defer span.End()
  ```

  (And while you’re at it, how 'bout explaining the fucking code you wrote? How hard is it to add a line explaining what that `defer span.End()` nonsense is? Remember, you’re trying to sell people on the need for tracing. If they already know what you’re talking about you’re preaching to the choir, son.)

  Of course in “The Result” he talks about the diff between the two functions … but doesn’t actually provide that diff. Instead he provides another hundred-line blob kept far away from the original so you have to bounce back and forth between them to spot the differences. Side-by-side diffs are a thing and there’s plenty of tools that make supplying them trivial. Maybe the author should think about using them.

- The technique this guy is espousing, if I’m reading it right, sounds fine but only in limited realms. This would kill development in my realm (small embedded systems), for example. If you have (effectively, from my domain’s perspective) infinite RAM, CPU, persistent storage, and bandwidth, then yes, this is likely a very good technique. (I can’t be certain, of course, because he hasn’t actually explained anything, just blasted uncommented code while referencing a library he assumes we know about. The only reason I followed any of it is because I’m familiar with Erlang’s tooling for this kind of stuff which puts what he’s showing off to shame.) But if your RAM is limited (hint: measured in 2-digit KB and shared by your stack(s), heap, and static memory), if your CPU is a blazing-fast 80MHz, and if you think 1MB of persistent storage (which your program binary has to share) is a true bucket of gold in wealth, and, yes, if you’re transmitting over a communications link that would have '80s-era modem jockeys looking on you with pity, then maybe, just maybe, tracing isn’t so great an idea after all.


2·1 year ago

Points for having a good sense of humour about it!

16·1 year ago

Step one: learn the name of the language?

2·1 year ago

Sloppiness is rarely contained in one narrow area. If programmers are so sloppy they’re using up 1.5-2GB to do literally nothing, then they’re sloppy in how they do pretty much everything.

My very first computer had all my friends exceedingly jealous. I had 208KB of RAM, see. (No, that’s not a typo. KB.) I could run four simultaneous users, each user having 48KB of RAM with a staggering 16KB available for the operating system. I had available spreadsheet and word processing applications, as well as, naturally, development applications. (I also had a secret weapon that drove my friends crazy: while they were swapping around their floppy disks holding 160KB of data each, I had two of those … and I had a 5MB hard disk!)

I compare those days to now and I have to laugh. My current computer (which is considered pretty weak by modern standards since I don’t game so I don’t give a fuck about GPUs and ten billion cores and clock rates in the Petahertz range) is almost four orders of magnitude faster than that first computer at the CPU level. I have six orders of magnitude more disk space and the disks are at least 3 orders of magnitude faster (possibly more: I don’t have the stats for the old drive on hand.) I have five orders of magnitude more memory and it, again, is probably 3 orders of magnitude or more faster.

Yet …

I don’t feel anywhere from 3 to 6 orders of magnitude more productive. Indeed I’d be surprised if I were even an order of magnitude more productive with this (barely) modern machine than I was with my first machine. Things look prettier (by far!) I’ll admit that, but in terms of actually getting anything done, these thousands to millions of times more resources are basically 100% wasted. And they’re wasted precisely because every time we increase our ability to do something in a computer by a factor of 10, sloppy- and lazy-assed programmers increase the resources they use to get things done by a factor of 11.

And it shows.

That old computer? Slow as it was, it booted from nothing to full, multi-user functionality in 10 seconds. 10 seconds after turning the power on, I could have up to four users running their software (or in my case one user with four screens) without a care in the world. Loading the word processor or spreadsheet was practically instantaneous. One, maybe two seconds. It didn’t register as a wait. I just timed loading LibreOffice Writer on this modern system that runs four orders of magnitude faster. Six seconds, give or take. Which is not even a slow-loading program! (Web browsers take significantly longer….)

And you know what problem I never once faced on that old computer running under a ten-thousandth (!) the speed? Typing faster than the system could keep up while displaying text. Yet as I type this I’m consistently typing one or two characters faster than the web browser can update plain text in a box. More than ten thousand times faster!

So yes, yes it matters. When a system made likely before you were even born is running circles around a modern system in key pieces of functionality (albeit looking far, far, far prettier while doing it!), there’s something that’s going horribly wrong in software. And shit like VS Code is almost the Platonic Form of what’s wrong with software today: bloated, slow, and so overpacked with features it has no elegance in functionality or implementation.

But hey, if you want to waste 2GB of RAM to load a 12KB text file, more power to you! I’ll stick with a system that only wastes 30MB of RAM to do the same, and runs faster, and looks cleaner, and is easier to extend. (And since its total code, including the C code and the Lua extensions, is something you can peruse and understand out of the box in a weekend, it’s also less buggy than VS Code: that’s the other cost of bloat, after all. Bugs.)

1·1 year ago

The thing that made me go to it was its syntax highlighting engine. I tend to use languages that aren’t well-supported by common tools (like VSCode), and Textadept makes it simple. REALLY simple.

As an example, I supplied the lexer for Prolog and Logtalk. Prolog is a large language and very fractious: there’s MANY dialects with some rather sizable differences. The lexer I supplied for Prolog is ~350 lines long and supplies full, detailed syntax highlighting for three (very) different dialects of Prolog: ISO, GNU, and SWI. (I’ll be adding Trealla in my Copious Free Time™ Real Soon Now™. On average it will be about another 100 lines for any decently-sized dialect.) I’ve never encountered another syntax highlighting system that can do what I did in Textadept in anywhere near that small a number of lines. If they can do them at all (most can’t cope with the dialect issue), they do them in ways that are painful and error-prone.

And then there’s Logtalk.

Logtalk is a cap language. It’s a declarative OOP language that uses various Prologs as a back-end. Which is to say there is, in effect, a dialect of Logtalk for each dialect of Prolog. So given that Logtalk is a superset of Prolog you’d expect the lexer file for it to be as big or bigger, right?

Nope.

64 lines.

The Logtalk support—a superset of Prolog—adds only 64 lines. And it’s not like it copies the Prolog support and adds 64 lines for a total of ~420 in the file. The Logtalk lexer file is 64 lines long. Because Textadept lexers can inherit from other lexers and extend them, OOP-style. This has several implications.

- It seriously reduced the number of lines of code to comprehend.

- It made adding support for another backend dialect automatic. Originally I wrote the Prolog support for just SWI-Prolog and then wrote Logtalk in terms of the Prolog support. When I later added dialect support, adding ISO Prolog and GProlog, to the Prolog support file, Logtalk automatically got those pieces of dialect support added without any changes.
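A rough way to picture the inheritance described above, sketched in Go rather than Textadept's Lua: a child lexer keeps only its own rules and falls back to its parent for everything else, so rules added to the parent later show up in the child with no changes. The `Lexer` type, its methods, and the example words are assumptions made for illustration; they are not the real lexer API or the contents of the actual Prolog and Logtalk files.

```go
package main

import "fmt"

// Lexer is a hypothetical, heavily simplified model: a set of
// word -> style rules plus an optional parent to inherit from.
type Lexer struct {
	name   string
	parent *Lexer
	rules  map[string]string
}

// NewLexer creates a lexer; pass nil as parent for a standalone lexer.
func NewLexer(name string, parent *Lexer) *Lexer {
	return &Lexer{name: name, parent: parent, rules: map[string]string{}}
}

// Add registers a rule on this lexer only.
func (l *Lexer) Add(word, style string) { l.rules[word] = style }

// Classify checks this lexer's own rules first, then walks up the parents.
func (l *Lexer) Classify(word string) (string, bool) {
	for cur := l; cur != nil; cur = cur.parent {
		if style, ok := cur.rules[word]; ok {
			return style, true
		}
	}
	return "", false
}

func main() {
	// Placeholder rules, standing in for a full Prolog lexer.
	prolog := NewLexer("prolog", nil)
	prolog.Add("consult", "builtin")

	// The child only states what it adds on top of its parent.
	logtalk := NewLexer("logtalk", prolog)
	logtalk.Add("object", "directive")

	_, known := logtalk.Classify("fd_domain")
	fmt.Println("before parent update, child knows fd_domain:", known)

	// Dialect support lands in the parent later; the child is untouched.
	prolog.Add("fd_domain", "builtin")

	style, known := logtalk.Classify("fd_domain")
	fmt.Println("after parent update, child knows fd_domain:", known, style)
}
```

The child stores a reference to the parent instead of a copy of its rules, which is what makes the later parent update visible without touching the child.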

In addition to this inheritance mechanism, take a look at this beautiful bit of bounty from the HTML lexer:

```lua
-- Embedded JavaScript ().
local js = lexer.load('javascript')
local script_tag = word_match('script', true)
local js_start_rule = #('<' * script_tag * ('>' + P(function(input, index)
  if input:find('^%s+type%s*=%s*(["\'])text/javascript%1', index) then return true end
end))) * lex.embed_start_tag
local js_end_rule = #('') * lex.embed_end_tag
lex:embed(js, js_start_rule, js_end_rule)

-- Embedded CoffeeScript ().
local cs = lexer.load('coffeescript')
script_tag = word_match('script', true)
local cs_start_rule = #('<' * script_tag * P(function(input, index)
  if input:find('^[^>]+type%s*=%s*(["\'])text/coffeescript%1', index) then return true end
end)) * lex.embed_start_tag
local cs_end_rule = #('') * lex.embed_end_tag
lex:embed(cs, cs_start_rule, cs_end_rule)
```

You can embed other languages into a top level language. The code you read here will look for the tags that start JavaScript or CoffeeScript and, upon finding them, will process the JavaScript/CoffeeScript with their own independent lexer before coming back to the HTML lexer. (Similar code is in the HTML lexer for CSS.)

Similarly, for those unfortunate enough to have to work with JSP, the JSP lexer will invoke the Java lexer for embedded Java code, with similar benefits for when Java changes. Even more fun: the JSP lexer just extends the HTML lexer with that embedding. So when you edit JSP code at any given point you can be in the HTML lexer, the CSS lexer, the JavaScript lexer, the CoffeeScript lexer, or the Java Lexer and you don’t know or care why or when. It’s completely seamless, and any changes to any of the individual lexers are automatically reflected in any of the lexers that inherit from or embed another lexer.
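The embedding idea can be sketched in a few lines of Go: the host lexer owns the text until it sees a start marker, hands everything up to the end marker to the child lexer, then takes over again. This is a toy model that assumes plain substring markers; the real lexers use LPeg patterns as in the Lua above, and `Segment`, `Embed`, and `Split` are hypothetical names, not anything from Textadept.

```go
package main

import (
	"fmt"
	"strings"
)

// Segment records which lexer is responsible for a stretch of text.
type Segment struct {
	Lexer string
	Text  string
}

// Embed describes a hypothetical embedding: between Start and End, the
// named child lexer takes over from the host. Markers must be non-empty.
type Embed struct {
	Child, Start, End string
}

// Split walks src once, giving text to the host until an embed's start
// marker appears, then to the child until that embed's end marker.
func Split(host string, embeds []Embed, src string) []Segment {
	var out []Segment
	emit := func(lexer, text string) {
		if text != "" {
			out = append(out, Segment{lexer, text})
		}
	}
	for len(src) > 0 {
		// Find the earliest start marker among all embeds.
		best, at := -1, len(src)
		for i, e := range embeds {
			if j := strings.Index(src, e.Start); j >= 0 && j < at {
				best, at = i, j
			}
		}
		if best < 0 {
			emit(host, src) // no more embedded regions
			break
		}
		e := embeds[best]
		// The start tag itself still belongs to the host.
		emit(host, src[:at+len(e.Start)])
		src = src[at+len(e.Start):]
		end := strings.Index(src, e.End)
		if end < 0 {
			end = len(src) // unterminated: child runs to end of input
		}
		emit(e.Child, src[:end])
		src = src[end:] // the end tag goes back to the host next round
	}
	return out
}

func main() {
	embeds := []Embed{
		{"javascript", "<script>", "</script>"},
		{"css", "<style>", "</style>"},
	}
	src := `<p>hi</p><script>var x = 1;</script><style>p{}</style>`
	for _, s := range Split("html", embeds, src) {
		fmt.Printf("%-10s %q\n", s.Lexer, s.Text)
	}
}
```

A lexer that extends the host, the way the JSP lexer is described as extending the HTML one, would in this model just append one more `Embed` entry to the list it inherited.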

Out of the box, Textadept comes with lexer support for ~150 languages of which ~25 use inheritance and/or embedding giving it some pretty spiffy flexibility. And yet this is only ~15,000 LOC total, or an average of 100 lines of code per language. Again, nothing else I’ve seen comes close (and that doesn’t even address how much EASIER writing a Textadept lexer is than any other system I’ve ever seen).

5·1 year ago

Doing nothing from the problem domain. I mean I could make a “hello world” program that occupies 16TB of RAM because it does weird crap before printing the message.

The problem domain is text editing. An idle text editor (not even displaying text!) is “doing nothing”. The fact it was programmed by someone to occupy multiple GB of space to do that nothing is bad programming.

12·1 year ago

I use Textadept over any of the named ones, including VS Code. (I use the vi subset of Vim for remote access to machines I haven’t yet installed Textadept on.)

Why?

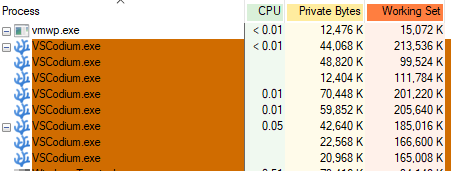

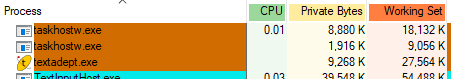

I have a moral objection to … Well let me show you:

One of those is VSCode (well, VSCodium, which is VSCode without Microsoft’s spyware installed), and the other is Textadept. One of those is occupying almost 30MB of memory to do nothing. The other is occupying about 1.5-2GB of memory … to do nothing. In both cases there are no files open and no compilers being run, etc. I find this kind of intense wastefulness a sign of garbage software and I try not to use garbage software. (Unfortunately I’m required to use garbage Windows 10 at work. 😒)

Oh, and Textadept has both a GUI version (and not the shit GUI that Emacs provides in its GUI version!) and a console version, allowing me to quickly get it running even on remote machines I have to use. VSCode/Codium … not so much. I’d have to run it with remote X and … that is painful when doing long-distance stuff.

5·1 year ago

As the old idiom goes: the devil is in the details. And there’s a looooooooooooooooooooooooooooooooot of devilry in 汉字.

91·1 year ago

That wasn’t my point.

My point was he was waxing rhapsodic about how cunning it was that the Japanese combined Kanji when they basically just used (a subset of) Chinese writing, where combining Hanzi has been a feature for literally thousands of years (in various forms).

12·1 year ago

The emphasis on Kanji composition is hilarious to me since that’s Chinese and in Chinese it’s got all the dials turned to 11.

That is the very core of my objection. He hasn’t identified the warrants for his argument, meaning his argument is literally gibberish to people working from a different set of warrants. Dudebro here could learn a thing or two from Toulmin.

This is a problem endemic to techbros writing about tech. They assume, quite incorrectly, that the entire world is just clones of themselves perhaps a little bit behind on the learning curve. (It never occurs, naturally, that others might be ahead of them on the learning curve or *gasp!* that there may be more than one curve! That would be silly!)

So they write without establishing their warrants. (Hell, they often write without bothering to define their terms, because “trace” means the same thing in all forms of computer technology, amirite?!) They write as if they have The Answer instead of merely a possible answer in a limited set of circumstances (which they fail to identify). And they write as if they’re on the top of the learning heap instead of, as is statistically far more likely, somewhere in the middle.

Which makes it funny when he sings the praises of a tracing library that, when I investigated it briefly, made me choke with laughter at just how painfully ineffective it is compared to tools I’ve used in the past; specifically Erlang’s tracing tools. The library he’s text-wanking to is pitifully weak compared to what comes out of the box in an Erlang environment. You have to manually insert tracing calls (error-prone, tedious, obfuscatory) for example. Whatever you don’t decide to trace in advance can’t be traced. Whereas Erlang’s tracing system (and, presumably Ruby-on-BEAM’s, a.k.a. Elixir) lets you make ad hoc tracing calls on live systems as they’re executing. This means you can trace a live system as it’s fucking up without having to be a precognitive psychic when coding, leaving the costs of tracing at 0 until such a time as you genuinely need them.

So he doesn’t identify his warrants, he writes as if he has the One True Answer, he assumes all programming forms use the same jargon in the same way, and he acts as if he’s the guru sharing his wisdom when he’s actually way behind the curve on the very tech he’s pitching.

He is, in a word, a programmer.