Nitter thread from Julio Merino on application responsiveness on early-2000s Windows computers versus modern Windows computers. Videos are available in the linked thread.

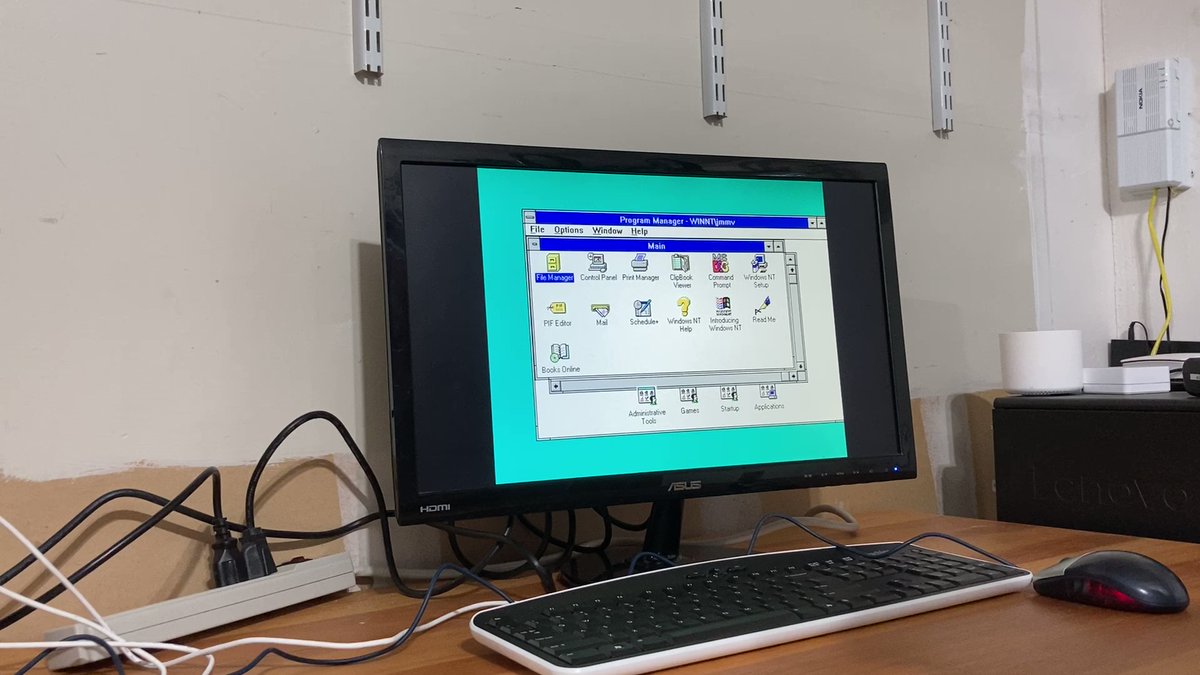

Please remind me how we are moving forward. In this video, a machine from the year ~2000 (600MHz, 128MB RAM, spinning-rust hard disk) running Windows NT 3.51. Note how incredibly snappy opening apps is.

Now look at opening the same apps on Windows 11 on a Surface Go 2 (quad-core i5 processor at 2.4GHz, 8GB RAM, SSD). Everything is super sluggish.

For those thinking that the comparison was unfair, here is Windows 2000 on the same 600MHz machine. Both are from the same year, 1999. Note how the immediacy is still exactly the same and hadn’t been ruined yet.

I recently set up a Windows 2000 computer with a 7W Via Eden CPU that scores 82 on Passmark, compared to about 46,000 for my main computer’s 5950x. The Windows 2000 PC has an old IDE HDD and 512MB RAM. Although this computer couldn’t run any remotely recent OS (even Antix Linux brought it to its knees), it flies along with Windows 2000 and feels just as fast for everyday things as new PCs do. I put MS Office 97 on there and I use it for distraction-free writing since it’s not networked.

The problem is that we’re being given vast amounts of CPU cycles and RAM, but our operating systems are taking them away again before the user gets a say. Windows 11 does so much in the background that it no longer feels like I am in control of the computer. I get to use it when it doesn’t have anything more important that Microsoft wants it to do. Linux is still better, but there’s a whole lot more going on in the background of a typical system than there used to be.

It’s not just the operating systems, it’s also the way software is developed now. Those old Windows applications were probably written in C++, which is only lightly abstracted over C, which is about as close as you’re going to get to machine code without going into assembly.

These days, you might have several layers of abstraction before you get to the assembly level. And those abstractions are probably also abstracted by third-party libraries, which might be chained to even more libraries, causing even more code to need to load and run. Then all of that might not ultimately even be machine code; it might be written in a language like C# or Java that compiles to an intermediate language, which has to be JIT-compiled by a runtime (which also needs to be loaded and run) before it can be executed. Then, that application might provide another layer of abstraction and run something in a browser-like instance, à la anything Electron-based.

deleted by creator

Are or were you able to compare it to SublimeText and UltraEdit by chance?

deleted by creator

Good to know, because UltraEdit has been my go-to editor for large files so far, especially large “single line” files (length-delimited data files, for example). So I probably don’t need to look for an alternative then.

Btw, Sublime is cross-platform. Even the license is cross-platform: buy once and use it on every Windows, Linux and Mac machine you use. I find it much, much snappier than VS Code. For large files it can’t beat UE (and therefore EmEditor), but for most editing tasks the UX beats the rest, IMO.

deleted by creator

Have you tried Geany, by any chance? I recall it being pretty quick back in the days of single-core machines being the norm, but I’m curious how it stacks up now in terms of snappiness.

In dealing with large files or in general?

Edits: I overgeneralized ignorantly and stand corrected.

That’s a good point.

~~No abstraction is performance-neutral;~~ Many abstractions are not performance-neutral ~~they all~~ but have some scenarios where they perform fast and others where they are slow. We’re witnessing the accumulation of hundreds of abstractions that may be poorly optimized or used for purposes outside of their optimal performance zones.

That’s not true. Zero-cost abstractions are a key feature of C++ and Rust. For example, Rust `Option<&T>` compiles down to nothing more than a potentially-null pointer.
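
As a rough illustration of the `Option<&T>` point, here is a minimal Rust sketch. The helper names `ref_sizes` and `first_even` are made up for this example and are not from the thread. It only checks that an optional reference takes up no more space than a plain reference, which is one observable consequence of `None` being stored as the null pointer; it does not measure runtime speed.

```rust
use std::mem::size_of;

/// Hypothetical helper: returns (size of `&T`, size of `Option<&T>`) in bytes.
fn ref_sizes<T>() -> (usize, usize) {
    (size_of::<&T>(), size_of::<Option<&T>>())
}

/// Hypothetical helper: returns a reference to the first even value, if any.
/// The `Option<&u32>` result is pointer-sized; `None` uses the null niche.
fn first_even(values: &[u32]) -> Option<&u32> {
    values.iter().find(|v| **v % 2 == 0)
}

fn main() {
    let (plain, optional) = ref_sizes::<u64>();
    println!("&u64: {plain} bytes, Option<&u64>: {optional} bytes");
    // The optional reference needs no extra tag byte.
    assert_eq!(plain, optional);

    let data: [u32; 4] = [1, 3, 4, 7];
    println!("first even: {:?}", first_even(&data));
}
```

The same size comparison does not hold for every type: wrapping a plain integer such as `u64` in `Option` does need extra space, because an integer has no spare "invalid" value to stand in for `None`.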